Profs. Angela Misri and Sheena Rossiter (MacEwan University) continue to collaborate on this multi-phase research project, which examines how AI voice cloning technologies may reshape the production, ethics, and audience trust dynamics of news podcasts.

News podcasts occupy a distinctive space within the broader podcast ecosystem. Unlike entertainment-driven formats, they operate within the professional norms and ethical frameworks of journalism: verification, attribution, accountability, and public service. For many audiences, they function as daily news briefings, comparable to print, radio, or television news, but delivered through a more intimate, voice-driven medium.

Because voice is central to credibility, authenticity, and trust, the introduction of synthetic or cloned voices raises pressing questions:

- How might AI-generated voices alter perceptions of journalistic authenticity?

- What are the legal and ethical implications of cloning a journalist’s voice?

- How should news organizations disclose the use of synthetic audio?

- Does AI voice use enhance accessibility and efficiency, or does it risk undermining trust?

This project combines audience research, newsroom interviews, legal analysis, and experimental design to explore how AI voice cloning may influence journalistic authority, labour practices, and the evolving soundscape of news.

In news podcasting, the journalist’s voice is both an instrument of information and an emotional performance that cultivates identification and moral connection with the listener.

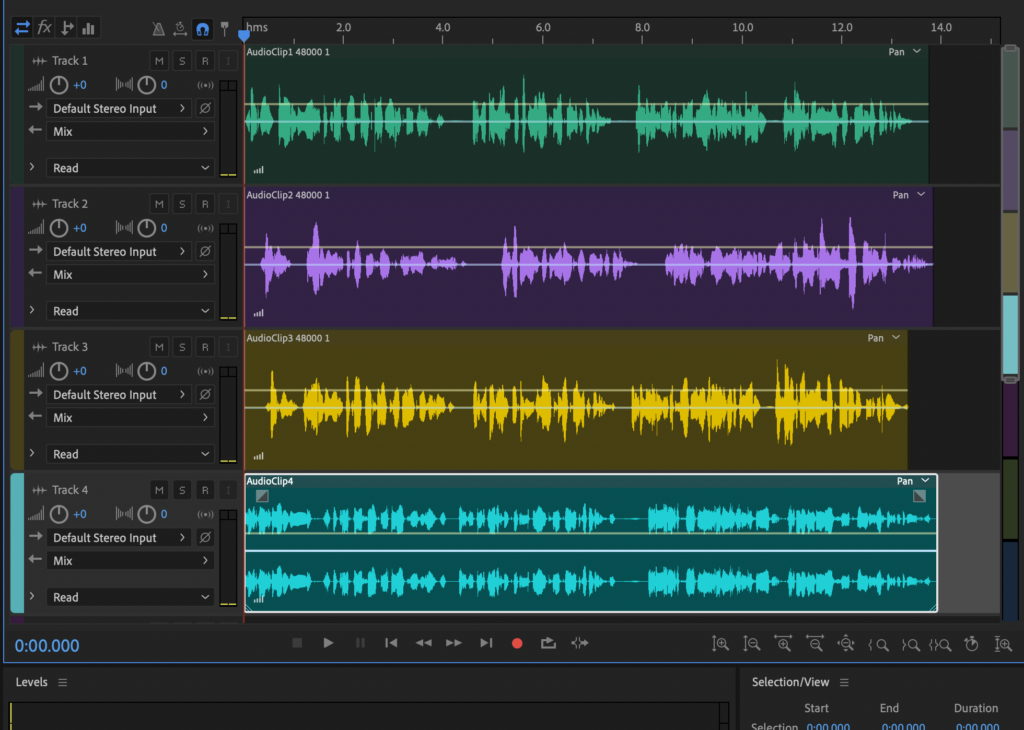

In the first phase of this research, Profs. Misri and Rossiter, aided by research assistant Dima Mironov, replicated their own voices using three commercially available AI voice cloning tools. The goal was to assess how closely the synthetic outputs approximated their natural speech patterns.

The cloned audio was evaluated against the original recordings using three primary measures:

- Word Error Rate (WER) to assess transcription and articulation accuracy

- Prosodic analysis to examine rhythm, stress, and intonation patterns

- Emotional conveyance to evaluate how effectively tone and affect were reproduced

This phase establishes a technical baseline for understanding how convincingly AI voice cloning can replicate journalistic speech — a critical step before assessing audience perception and ethical implications.
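For readers unfamiliar with the first measure, WER is conventionally defined as the number of word substitutions, deletions, and insertions needed to turn a transcript of the audio into the reference script, divided by the number of words in the reference. The sketch below is a minimal illustration of that standard definition, not the project's actual evaluation pipeline, which is not described here. The function name `word_error_rate` and the sample sentences are hypothetical, and it assumes a transcript of the cloned audio is already available as plain text.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    `reference` is the script that was read aloud; `hypothesis` is a
    transcript of the (cloned) audio. Both names are illustrative.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # matching i reference words to nothing costs i deletions
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Made-up sample: one substituted word out of six in the reference.
script = "the council voted on the budget"
transcript = "the council voted on a budget"
print(word_error_rate(script, transcript))
```

A lower score means the transcript is closer to the script. Because insertions count as errors, WER can exceed 1.0 when the transcript contains many extra words.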

Profs. Misri and Rossiter presented the findings from this research at the 2025 Canadian Communications Conference and the 2025 Gen AI & Creative Practice conference.

We have also submitted the paper to an academic journal and are awaiting a response from the editors and reviewers.

In the second phase of the project, we examined how audiences respond to AI-cloned voices in a news context. Following approval from the TMU Research Ethics Board, Prof. Misri partnered with the polling firm Leger to conduct a national survey of 112 Canadian news podcast listeners.

Participants were asked to listen to paired audio samples — one human, one AI-cloned — and indicate whether they could distinguish between them. The survey then gathered more granular responses related to perceived authenticity, trustworthiness, and the role of transparency in shaping audience reactions.

This phase shifts the focus from technical replication to relational impact: not simply whether AI voices can sound human, but whether they are heard as credible within journalistic contexts.

The findings from this research were also presented at the 2025 Gen AI & Creative Practice conference and now form the first draft of a chapter in a forthcoming book.

In the third phase of the project, Profs. Misri and Rossiter plan to produce an experimental news podcast that intentionally incorporates AI tools at multiple stages of the production process — including scripting assistance, voice synthesis, editing, and post-production workflows.

The goal is twofold: to experiment with the technology in real time within a journalistic framework, and to increase public literacy about what these tools can (and cannot) do. By making the use of AI visible and transparent within the production itself, this phase will explore how disclosure, context, and editorial intent shape audience understanding and trust.

This practice-based component extends the project beyond analysis into applied design, testing how AI might be integrated ethically into news production rather than simply theorized from the outside.

Further reading:

The changing shape and new economics of news podcasting: From listening to watching, from podcasts to shows – Reuters Institute

AI voice cloning: how a Bollywood veteran set a legal precedent – WIPO

He spent decades perfecting his voice. Now he says Google stole it. – Washington Post